The Case for Brakes

Now is the winter of our discontent

One of my favorite Reddit zingers was in response to a question about a pickup truck's towing capacity. The answer was something along the lines of, “No one cares how much weight your truck can pull; what matters is if you can stop the weight.”

The point is that brakes are not as important if everything is going well. But as soon as the situation deteriorates, the theoretical idea of brakes becomes increasingly relevant, as does the question of whether the vehicle’s brakes are sufficient for the speed and weight.

I’d like to crack open the idea that AI is different from other technologies and should not be handled as we have historically treated novel technological developments.

It isn’t a great time.

Leading up to the November 2022 release of ChatGPT, we had been through a lot as a society. On June 29th, 2007, Apple released the first smartphone1. There is no shortage of popular commentary about how much it has changed the world. Instagram was released in 2010, and TikTok in 2017. The COVID pandemic started in 2020, and OpenAI released ChatGPT, built on its GPT-3.5 model, in November 2022. It had been a busy 15 years.

None of these technological developments has had a net positive effect on society. The polarizing echo chamber of Facebook drove us apart. Smartphones made it easier to stay distant. Instagram gave us an addictive way to compare our lives to everyone else, and TikTok reinforced the idea that monetizable attention span could be measured in seconds. The forced isolation from the COVID lockdowns is still a raw subject for many people.

Mentally, we aren’t in a good place. Only 46% of U.S. adults read at or above a 6th-grade level2, and childhood literacy and numeracy tanked after the COVID lockdowns3. Everyone talks about the rise in polarization4, but there is more to the story than just Americans and their partisan anger. We seem to have lost the ability to discuss nuanced issues and to hold multiple ideas or perspectives in mind at once, which is the key to compromise and balance in civil discourse.

Our present situation has forced us to invent new descriptive terms for our poor mental state, such as brain rot5, the Google Effect6, Zombie Scrolling7, and TikTok Brain8. This seems quite serious.

In the middle of this polarized and illiterate state of societal cognitive decline, we are speedily adopting, on a wide scale, a tool that is designed to push us to offload our cognitive abilities. It doesn’t seem wise.

What we are doing is NOT unprecedented. We launched into a society-wide adoption of social media not too long ago, and we still have gaps in our understanding of the downstream consequences. Twenty-two years and 3+ billion monthly active users later, we are just now litigating the negative effects of Facebook at the Supreme Court9. Our society adopted the smartphone 19 years ago, and we are still in the thick of sorting out the mess we’ve made for ourselves.

Even if all of the utopian promises of AI eventually come true, there is an easy case to make that we should use extreme caution around any tool that could take a sledgehammer to our teetering mental and societal state, especially if we’re forecasting 10% - 25% unemployment rates. We have already pushed AI into schools10, colleges11, and the workplace12 with disastrous results13. At a minimum, the public release of this technology should be highly nuanced, for adults only, and with great care towards ensuring that the end user understands the risks associated with using the product.

With great power comes great…

As I introduced in an earlier essay, one way to understand AI is to see it as widely used software distributed by a Silicon Valley startup. AI is accessed via the cloud, which runs on servers in data centers. AI isn’t magic; it’s a software program written with code that works with large data sets. And when you use ChatGPT or Claude, you aren’t interacting directly with the LLM; you are interacting with an app on your phone that technically isn’t much different from any other app.

All software companies are subject to security vulnerabilities, bugs, outages, and hacks. Equifax lost the Social Security numbers of 147 million Americans14. The 2021 Facebook outage is significant enough to have its own Wikipedia page15. Google just announced a high-risk security update for its Chrome browser that affects 3.5 billion users16.

Popular AI tools have already experienced outages and security vulnerabilities, and that trend will continue. The threat model for AI is quite significant, not least because we are plugging it into so many devices, mobile apps, and browsers.

Regular users of AI share their entire lives with the chatbot in the questions that they ask. I’ve personally had the horrifying experience of video calls where people accidentally share their ChatGPT chat history; it is incredibly personal information. For those who don’t interact with AI in that way, imagine sharing your Google search history with someone, with questions ranging from “what is a good local restaurant” to “how do I do this thing at my job” to “what is this skin issue on my leg.” This is incredibly private and sensitive data, and ChatGPT has already lost it once17.

Beyond privacy, there is a running joke in the cybersecurity community: we spent years emphasizing to everyone how important it is to lock down laptop permissions, hide passwords, and take extreme caution when installing programs. And now, many people have freely handed the keys to their entire digital ecosystem to an incredibly powerful tool.

The unique threat model for AI is that we are trusting it with very sensitive business data AND personal data, and unauthorized access to someone’s ChatGPT data becomes a single point of failure for both worlds. The security risks are compound, complex, and concentrated. There is another layer of risk in AI misalignment, but I’ll leave that for an upcoming article.

“It’s the economy, stupid.”

The question, “Will AI take away human jobs?” is incredibly difficult to answer. To a certain extent, it doesn’t matter whether AI could theoretically replace your job; what matters is whether your boss thinks that AI can get close enough. Some layoffs, specifically in customer service, can be attributed to this lowering of the bar to an LLM’s level. We are seeing a staggering 35% drop in entry-level white-collar job openings18, as many companies decide to wait and see if AI can replace those roles. Even where AI is not actually replacing humans in the workforce, the expectation that it might is already having a tremendous negative impact.

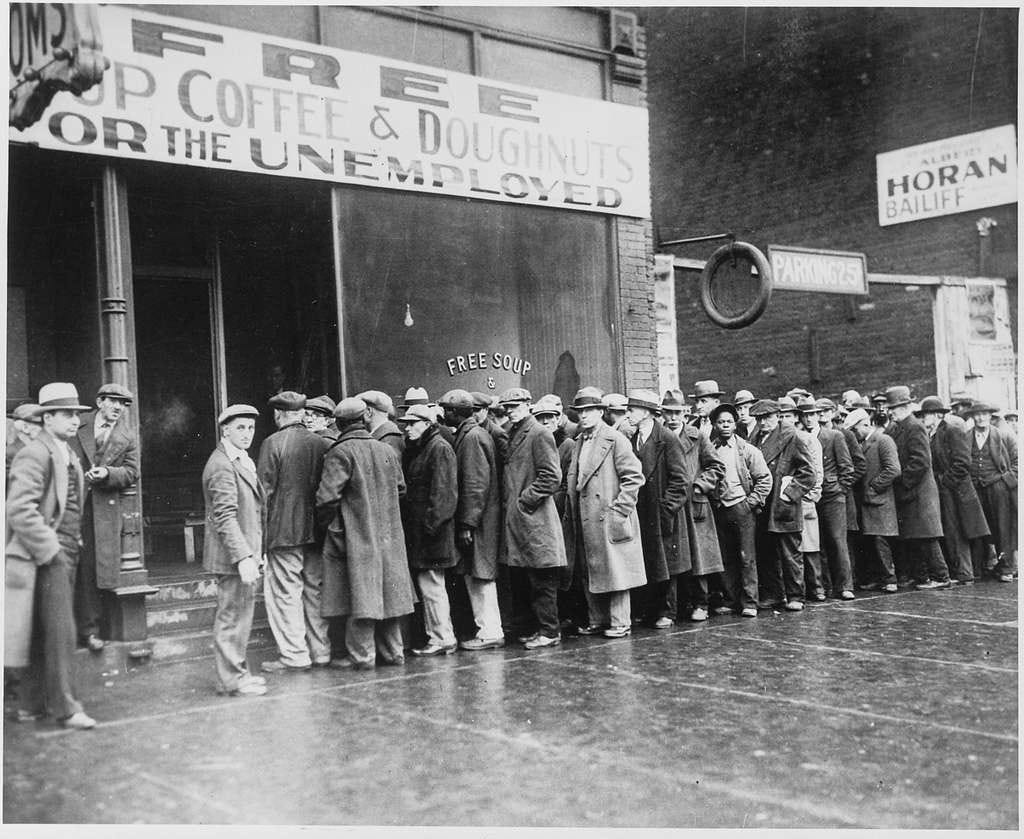

The CEOs in the AI world have repeatedly claimed that AI will cause between 10% and 25% unemployment, which would put us economically somewhere between the 2008 financial crisis19 and the Great Depression20. These are not trivial numbers. Have we thought through the economic and societal ramifications? Why are we allowing companies to go down this path if these are their acknowledged consequences?

In many ways, the business models of Anthropic, OpenAI, xAI, and the other major players are completely unsurprising to anyone who has spent any time around software startups. They’re not cashflow positive, they’re subsidizing their initial customers, and they’re building contracts on promises, hopes, and visions. This is not new. What is unusual is how much of the stock market is now directly tied to the success of these companies. By some estimates, 47% of the S&P 500 is directly tied to AI,21 and AI drives a full third of the entire US stock market22. That is a tremendous amount of economic exposure to a limited set of startups, a type of investment that has historically been classified as higher risk.

If we are in an economic bubble, I certainly don’t want to see it popped. But I think it would behoove us to stop inflating the bubble until we have done a bit more analysis of the economic risks of our current path.

The path forward

As I have outlined above, there are some real risks with continuing the course of adopting and integrating AI. Ignoring the issues won’t make them go away.

Start the conversation in your circles. If you find a thought-provoking or contrarian piece on AI, share it and use it as a conversation starter with family, friends, and coworkers. Society isn’t asking the right questions regarding AI and technology, so ask them yourself: has AI been a net positive or a net negative thus far for work, school, and the home? There is publicly available data on the effects thus far, but we need to move it into public discourse.

But don’t just argue about the negative path of technology; take the positive path in the opposite direction and encourage others to do the same. Read physical books, take phone-free walks, and put away devices at mealtimes. Get acquainted with cookbooks, dictionaries, and a journal. Spend time writing out your ideal relationship with your smartphone, then delete smartphone apps to match your intent. Turn off notifications and set the screen to grayscale to make the phone less addictive. Instead of asking Google or ChatGPT, call or text a friend.

Familiarize yourself with doing things the old-fashioned analog way. Accustom yourself to being without your smartphone for a few hours at a time. Listen to the birds and count the varieties of plants in your area. Try to minimize the sources of technological distraction in your life. Become aware of how many times you pick up your phone, and what you’re seeking in that moment, whether it is a distraction or something else. Move towards physical reality and away from digital reality.

It will take many individual conversations and societal decisions to stop the AI train. But in the meantime, you can personally step off the train.

Photo captions are from the song “Hotel California” by The Eagles

Photo Credits

Photo by Quintin Gellar: https://www.pexels.com/photo/red-and-silver-truck-on-asphalt-road-6574072/

Photo by Robert So: https://www.pexels.com/photo/group-of-women-busy-with-their-smatphones-in-the-park-16654339/

Photo by Panumas Nikhomkhai: https://www.pexels.com/photo/light-on-computer-17489153/

Unemployed men queued outside a depression soup kitchen opened in Chicago by Al Capone, 02-1931 - NARA - 541927

The iPhone wasn’t actually the first smartphone, but it was for almost everyone. This was technically the first smartphone.